What Beacon checks

Beacon evaluates your server and any MCP Apps it exposes against the specifications and platform requirements that ChatGPT and Claude.ai apply when reviewing an app.

MCP server specs (protocol conformance and tool/resource shape)

MCP Apps specs (requirements that apply once your server ships an MCP App, a view resource rendered by the client):

- Apps SDK reference: ChatGPT Apps requirements (metadata, CSP, widget descriptions)

- Remote MCP Server Submission Guide: Claude.ai-specific requirements for remote MCP Apps

Each audit reports a readiness result per platform (ChatGPT / Claude.ai) derived from the checks that apply to each platform.

Beacon currently only supports unauthenticated MCP servers. If your server responds with HTTP 401, Beacon reports that authentication is required and skips the rest of the checks. Support for authenticated audits is on the roadmap.

Running an audit

From the dashboard

Open your team’s Beacon tab, paste an HTTPS URL, and hit Run. The page streams progress as each collector finishes and opens a detailed report when the audit completes. Past audits for the team are listed so you can revisit or compare them.

From the CLI

Use `alpic audit` to run Beacon from your terminal or CI pipeline.

Pass `--json` to get the full report for further processing in CI.
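As a rough illustration of what "further processing in CI" could look like, the sketch below counts issues per severity in a parsed JSON report and derives a pass/fail exit code. The report shape used here (an `issues` array whose items carry a `severity` field, with `error` treated as blocking) is an assumption made for the example, not Beacon's documented schema; adjust the field names to match the actual `--json` output.

```python
import json
from collections import Counter

# ASSUMPTION: hypothetical report shape, not Beacon's documented schema.
SAMPLE = """
{
  "issues": [
    {"severity": "error", "message": "example blocking issue"},
    {"severity": "warning", "message": "example non-blocking issue"},
    {"severity": "error", "message": "another blocking issue"}
  ]
}
"""


def summarize(report: dict) -> Counter:
    """Count issues per severity; items without a severity count as 'unknown'."""
    return Counter(
        issue.get("severity", "unknown") for issue in report.get("issues", [])
    )


def exit_code(counts: Counter, blocking=("error",)) -> int:
    """Return 1 if any blocking severity is present, else 0."""
    return 1 if any(counts[sev] > 0 for sev in blocking) else 0


counts = summarize(json.loads(SAMPLE))
for severity, n in sorted(counts.items()):
    print(f"{severity}: {n}")
print("exit code:", exit_code(counts))
```

In a pipeline, `SAMPLE` would be replaced by the text captured from the CLI (for example read from standard input), and the value returned by `exit_code` would be passed to `sys.exit` to fail the job.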

The CLI skips the end-to-end app rendering category by default because it takes several minutes per platform. Run the full audit (including live app rendering) from the dashboard.

Reading the report

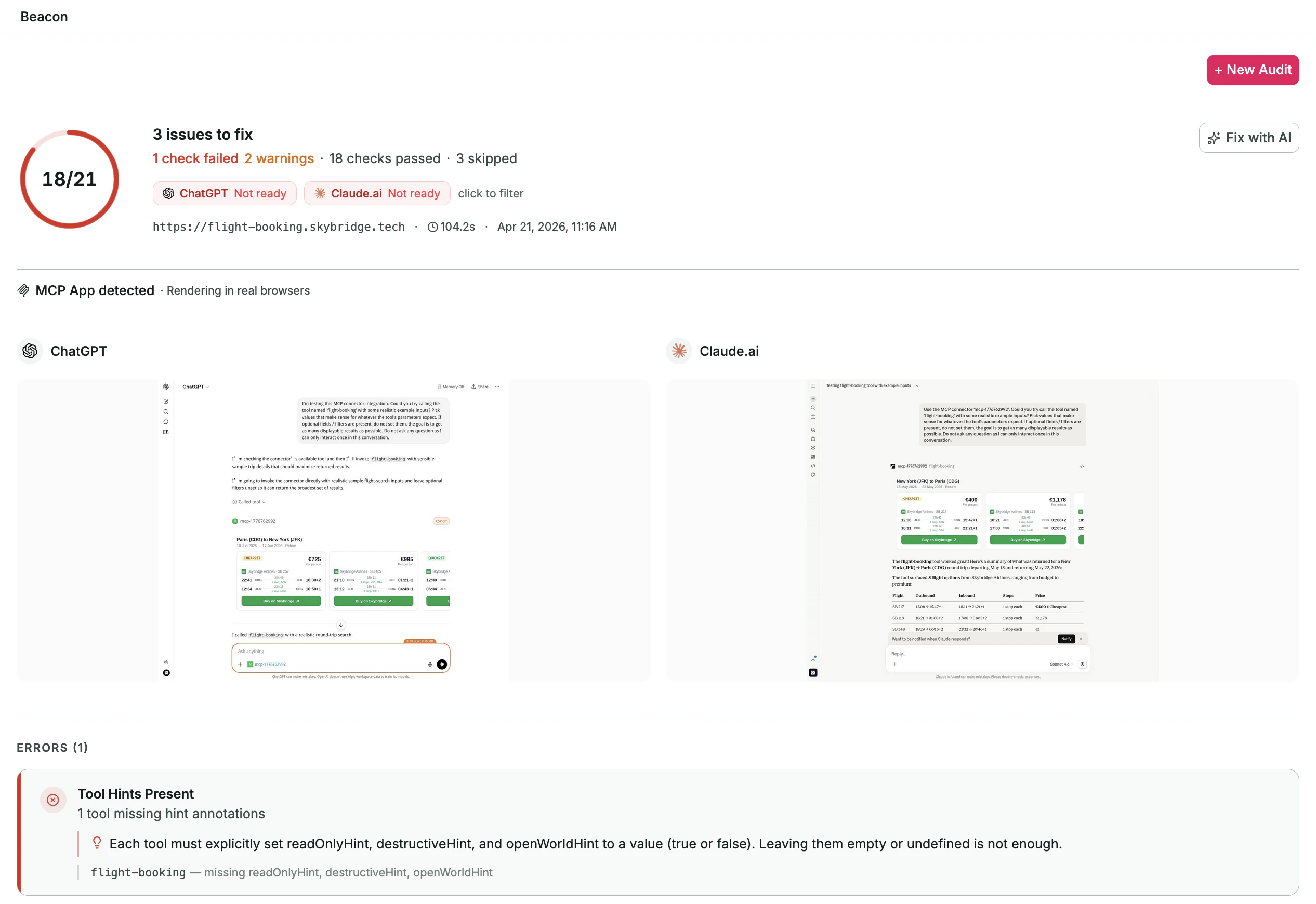

The report groups results by severity and surfaces:

- A readiness badge for ChatGPT and Claude.ai, green when no blocking errors remain and, for servers that ship an MCP App, at least one app check succeeded for that platform.

- A list of issues with a short message, affected tool/resource, and a one-line hint explaining how to fix it.

- App screenshots captured from the real ChatGPT and Claude.ai browser sessions Beacon ran, so you can visually confirm what your MCP App looks like in each client.

- A Fix with AI action that packages the failing checks into a prompt you can paste into Claude Code, Cursor, or any other coding agent. The prompt references the same specs listed above so the agent can reason against the source of truth.

If a check is skipped, it’s because its required artifact wasn’t available: for example, a tool-level check has no tools to run against, or an app-rendering check has no MCP App resources to render. Skips are informational and never block platform readiness.
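The readiness rule described above can be sketched as a small function. This is only an illustration of the stated logic, not Beacon's implementation: the `Check` type, its field names, and the status strings are hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass
class Check:
    # ASSUMPTION: all field names and values below are hypothetical.
    category: str          # "server" or "app"
    status: str            # "passed", "failed", or "skipped"
    blocking: bool = True  # whether a failure counts as a blocking error


def platform_ready(checks: Iterable[Check], ships_app: bool) -> bool:
    """Apply the readiness rule for one platform's set of checks."""
    checks = list(checks)
    # Skipped checks are informational: only failed blocking checks block.
    if any(c.status == "failed" and c.blocking for c in checks):
        return False
    # Servers that ship an MCP App also need one successful app check.
    if ships_app:
        return any(c.category == "app" and c.status == "passed" for c in checks)
    return True


server_only = [Check("server", "passed"), Check("app", "skipped")]
print(platform_ready(server_only, ships_app=False))
```

Here a server without an MCP App stays ready even though its app-rendering check was skipped, because only failed blocking checks count against it.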